The University of Arizona aerospace engineering ranking is a numerical assessment of the quality and reputation of the university's Aerospace Engineering program relative to comparable programs nationally or globally. Such assessments typically consider factors such as faculty expertise, research output, student selectivity, and graduate outcomes.

Such program evaluations are significant indicators for prospective students, alumni, and employers. High scores often correlate with enhanced career opportunities, increased research funding, and a stronger academic community. The historical trajectory of the program’s placement provides insight into its growth, stability, and commitment to excellence within the field.

The following sections examine the specific factors that influence these evaluations and explore how this information can inform decisions about education and career pathways in aerospace engineering.

Interpreting Program Assessments

The numerical standing of an academic program, such as the University of Arizona's aerospace engineering program, provides external validation of its strengths and areas for development. Interpreting these figures effectively requires an understanding of the underlying methodologies and metrics.

Tip 1: Evaluate Ranking Criteria: Different ranking organizations employ distinct methodologies. Understanding which factors are prioritized (e.g., research funding, faculty qualifications, student placement rates) provides context for the program's placement.

Tip 2: Consider Longitudinal Data: A single year’s assessment offers limited insight. Examine the program’s standing over several years to identify trends and determine if its performance is improving, declining, or remaining stable.

Tip 3: Compare to Peer Institutions: Benchmarking against similar programs at other universities provides a more nuanced perspective. Focus on institutions with comparable research output, student demographics, and geographical location.

Tip 4: Investigate Faculty Expertise: A high program placement often correlates with the presence of renowned faculty. Research the professors' publications, research grants, and involvement in industry partnerships.

Tip 5: Analyze Student Outcomes: Explore the career paths of program graduates. Determine the types of companies they are employed by, their average starting salaries, and their progression within the field.

Tip 6: Assess Research Opportunities: A strong aerospace engineering program will provide ample research opportunities for students, allowing them to work on cutting-edge projects and contribute to the advancement of the field.

Tip 7: Examine Accreditation Status: Ensure that the program is accredited by relevant professional organizations, such as ABET. Accreditation signifies that the program meets established quality standards.

By carefully evaluating the factors behind the University of Arizona aerospace engineering program's assessment, one can build a comprehensive picture of its strengths and weaknesses and make a better-informed decision.

1. Methodology Rigor

The rigor of the methodology used to evaluate academic programs is paramount to the validity and reliability of any resulting University of Arizona aerospace engineering ranking. The specific processes and metrics used directly shape how the ranking is perceived and how useful it is.

- Data Source Validation

Data used in these assessments must come from verifiable sources, such as official university reports, accreditation bodies, and reputable surveys. The absence of verifiable data undermines the entire ranking process. For example, if placement rates are self-reported without independent audit, the resulting ranking becomes suspect, which directly diminishes the perceived value of the University of Arizona aerospace engineering ranking.

- Weighting and Scoring Transparency

Ranking systems typically assign weights to different factors (e.g., research funding, student-faculty ratio, graduation rates). The rationale behind these weights, and the specific scoring formulas, should be documented transparently. Opaque weighting schemes make it difficult to assess the fairness and relevance of the University of Arizona aerospace engineering ranking. Without such documentation, readers cannot tell whether they value a particular factor more or less than the ranking weights it.

- Peer Review Integration

Some ranking methodologies incorporate peer review surveys, soliciting opinions from faculty at other institutions. While valuable, these surveys are subjective and vulnerable to bias. Rigorous methodologies mitigate bias through large sample sizes, clearly defined evaluation criteria, and statistical analysis to identify and adjust for outliers. This is critical to the fairness of the University of Arizona aerospace engineering ranking.

- Statistical Validity

The statistical methods employed must be appropriate for the data and the research question. Simple averaging can be misleading. Regression analysis, for example, can reveal the relative importance of different factors while controlling for confounding variables. Sound statistical techniques help ensure that a University of Arizona aerospace engineering ranking reflects actual program quality rather than statistical artifact.
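To make the weighting point concrete, here is a minimal sketch of how a weighted composite score can be computed and how the choice of weights can reorder programs. The metric names, weights, and scores are hypothetical illustrations on an assumed 0-100 normalized scale; they are not any ranking organization's actual methodology or data.

```python
# Minimal sketch of a weighted composite score.
# All metric names, weights, and values are HYPOTHETICAL illustrations.
# Requires Python 3.9+ for the dict[str, float] annotations.


def composite_score(metrics: dict[str, float], weights: dict[str, float]) -> float:
    """Weighted sum of normalized metrics (each assumed to be on a 0-100 scale).

    Weights are divided by their total so they sum to 1, keeping the result on 0-100.
    """
    total_weight = sum(weights.values())
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive number")
    return sum(metrics[name] * w / total_weight for name, w in weights.items())


def rank_programs(programs: dict[str, dict[str, float]], weights: dict[str, float]) -> list[str]:
    """Program names ordered from highest to lowest composite score."""
    return sorted(programs, key=lambda p: composite_score(programs[p], weights), reverse=True)


# Hypothetical normalized metrics for two unnamed programs.
programs = {
    "Program A": {"research": 90.0, "selectivity": 60.0, "placement": 70.0},
    "Program B": {"research": 65.0, "selectivity": 85.0, "placement": 80.0},
}

# Two hypothetical weighting schemes.
research_heavy = {"research": 0.6, "selectivity": 0.2, "placement": 0.2}
outcome_heavy = {"research": 0.2, "selectivity": 0.3, "placement": 0.5}

print(rank_programs(programs, research_heavy))  # ['Program A', 'Program B']
print(rank_programs(programs, outcome_heavy))   # ['Program B', 'Program A']
```

With the same underlying data, Program A scores 80 under the research-heavy scheme and Program B scores 78.5 under the outcome-heavy one, so the order flips. This is why published weights matter: a reader who cares mostly about placement can re-run the arithmetic with weights that match their own priorities.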

In summary, the value of any University of Arizona aerospace engineering ranking is intrinsically linked to the rigor of its methodology. A transparent, statistically sound, and data-driven approach is essential for building confidence in the results.

2. Reputation Influence

Reputation, a critical intangible asset, exerts a substantial influence on the University of Arizona’s Aerospace Engineering program’s assessment. Esteem earned through research breakthroughs, successful alumni, and impactful industry collaborations directly translates into higher perceived program quality. This enhanced perception, in turn, often leads to more favorable evaluations from ranking bodies. A positive reputation attracts highly qualified faculty, talented students, and increased research funding, creating a self-reinforcing cycle. A university celebrated for its contributions to space exploration, for example, may see its Aerospace Engineering program benefit from the halo effect of this success. These factors contribute significantly to the program’s standing.

Reputation rests not only on concrete achievements but also on effective communication and branding. A program that actively promotes its faculty's expertise, student successes, and research impact cultivates a stronger reputation. Maintaining consistent engagement with industry partners, participating in relevant conferences, and producing high-quality publications are strategies that build and reinforce a positive image. Conversely, negative events, such as research misconduct allegations or low graduate employment rates, can significantly damage a program's reputation and negatively affect its ranking. For instance, an aerospace engineering program involved in a high-profile engineering failure might experience a decline in its ranking despite other strengths.

In summation, reputation serves as a crucial element in determining the overall evaluation of an Aerospace Engineering program at the University of Arizona. A strong reputation attracts resources, talent, and recognition, ultimately contributing to a higher University of Arizona aerospace engineering ranking. Understanding the dynamics of reputation allows programs to strategically manage their image, attract stakeholders, and achieve sustained excellence. Challenges include maintaining a positive reputation amid evolving industry demands and addressing reputational damage effectively. This understanding links back to the overarching goal of optimizing program quality and attracting top students and faculty.

3. Data Transparency

Data transparency is a cornerstone of credible program assessments, particularly for the University of Arizona's Aerospace Engineering ranking. The degree to which ranking methodologies and underlying data are accessible and understandable directly affects the trustworthiness and utility of the evaluation.

- Source Data Accessibility

The source data used to determine the assessment should be readily accessible. If the data are proprietary or come from sources with limited public availability, that raises concerns about potential biases and the replicability of the results. For example, if a ranking relies heavily on alumni surveys, the survey methodology, response rates, and the data themselves should be available for scrutiny. Without such access, stakeholders cannot tell whether a program has been unfairly undervalued.

- Methodological Disclosure

A comprehensive description of the ranking methodology, including the weighting of various criteria and the statistical techniques employed, is essential. Opaque methodologies obscure the factors driving the ranking and hinder stakeholders' ability to interpret the results meaningfully. For example, if student-faculty ratio is a key criterion, the precise definition of "faculty" and the method for calculating the ratio should be clearly stated. Transparency also benefits the University: open data let it demonstrate concretely what makes its engineering program strong, which adds to its prestige.

- Error Reporting and Correction

Ranking bodies should have mechanisms in place for reporting and correcting errors in the data or methodology. Transparency in this process builds confidence and demonstrates a commitment to accuracy. For example, if a university identifies a data inaccuracy that affected its ranking, the ranking organization should promptly investigate and issue a corrected assessment. Without a way to correct errors, inaccuracies can persist and foster long-term distrust of the ranking and of the University's programs.

- Independent Audits

External audits of the ranking methodology and data collection processes can further enhance credibility. Independent verification provides assurance that the assessment is conducted fairly and objectively. For example, an audit by a reputable statistical organization can validate the soundness of the statistical techniques used and confirm the accuracy of the data. Independently audited data make both the ranking and the program's placement in it more credible.

In conclusion, data transparency is not merely a desirable feature of program assessments but a fundamental requirement for ensuring their validity and usefulness. Increased transparency facilitates informed decision-making by prospective students, employers, and university administrators. Promoting transparency in the assessment of the University of Arizona’s Aerospace Engineering program strengthens its credibility and enhances its value to stakeholders.

4. Longitudinal Trends

Longitudinal trends provide critical context for understanding the significance of any specific University of Arizona aerospace engineering ranking. A single year's assessment offers limited insight into the true quality and trajectory of a program. Examining performance over several years reveals whether a high ranking is sustained, improving, or simply a statistical anomaly. Consistent improvement suggests a program is actively investing in its faculty, research, and student resources, demonstrating a commitment to long-term excellence. Conversely, a declining trend in the University of Arizona aerospace engineering ranking warrants further investigation into potential issues such as decreased funding, faculty attrition, or curriculum stagnation. For example, if the University of Arizona's Aerospace Engineering program showed a steady rise in rankings over a decade, that trend would signal sustained commitment to quality and innovation, a stronger indicator than a single top-ten placement.

Analyzing longitudinal trends also makes it possible to identify the impact of specific strategic initiatives. Did a new research center coincide with an improvement in faculty publication rates and a subsequent ranking increase? Did curriculum revisions lead to better graduate employment outcomes and improved reputation scores? These are the kinds of questions longitudinal data can help answer. Understanding these trends also allows the University to address potential challenges proactively and adapt to evolving industry demands. For instance, a decline in industry-sponsored research might prompt the university to seek alternative funding sources or strengthen ties with specific companies. Observing changes over time therefore yields a more comprehensive understanding than a single snapshot.
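One simple way to separate a sustained trend from year-to-year noise is to fit a least-squares line through several years of rank positions. The sketch below assumes rank 1 is best (so a negative slope means the program is climbing), and the years, rank values, and the 0.5-positions-per-year tolerance are hypothetical choices for illustration, not real data for any program.

```python
# Minimal sketch: estimate a multi-year ranking trend with an
# ordinary least-squares slope. Rank positions below are HYPOTHETICAL.


def trend_slope(years: list[int], ranks: list[float]) -> float:
    """Least-squares slope of rank position per year.

    Because rank 1 is best, a negative slope means the program is moving
    up the list over time; a positive slope means it is slipping.
    """
    if len(years) != len(ranks) or len(years) < 2:
        raise ValueError("need at least two paired observations")
    n = len(years)
    mean_x = sum(years) / n
    mean_y = sum(ranks) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(years, ranks))
    var = sum((x - mean_x) ** 2 for x in years)
    return cov / var


def describe(slope: float, tolerance: float = 0.5) -> str:
    """Classify a slope; the tolerance (positions per year) is an assumed threshold."""
    if slope < -tolerance:
        return "improving"
    if slope > tolerance:
        return "declining"
    return "stable"


years = [2019, 2020, 2021, 2022, 2023]
ranks = [40, 38, 39, 35, 33]  # hypothetical positions, not real data

s = trend_slope(years, ranks)
print(round(s, 2), describe(s))  # -1.7 improving
```

Note that the one-year dip in 2021 does not change the overall picture: the fitted slope still shows the program gaining about 1.7 positions per year, which is exactly the kind of signal a single snapshot would miss.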

In summary, longitudinal trends are essential for interpreting the meaning and significance of the University of Arizona aerospace engineering ranking. They provide valuable insights into a program's long-term trajectory, the effectiveness of strategic initiatives, and its ability to adapt to changing circumstances. Failure to consider these trends can lead to a distorted view of a program's true quality and potential. This historical perspective strengthens the meaning and value of any given ranking, contributing to more informed decision-making by prospective students, faculty, and administrators.

5. Peer Comparison

Peer comparison is a vital component in the assessment of the University of Arizona’s Aerospace Engineering program ranking. Analyzing the program relative to similar institutions provides essential context, revealing its strengths and weaknesses in a competitive landscape and adding depth to the understanding of its numerical placement.

- Benchmarking Against Aspirant Institutions

Identifying and comparing the University of Arizona's program to institutions considered leaders in aerospace engineering allows for a clear understanding of areas for improvement. For example, comparing research funding levels, faculty qualifications, and graduate placement rates against institutions like MIT or Stanford offers targets for advancement and informs strategic planning. This analysis is essential to any effort to raise the University of Arizona aerospace engineering ranking.

- Evaluating Programmatic Focus and Specializations

Examining the specific research areas and specializations offered by peer institutions provides insights into emerging trends and potential areas for differentiation. If several top-ranked programs are heavily invested in areas like autonomous aerospace systems or advanced materials, this highlights areas where the University of Arizona might consider expanding its expertise to enhance its competitiveness and improve its aerospace engineering ranking.

- Analyzing Resource Allocation and Investment

Comparing the resources allocated to aerospace engineering programs at different universities, including funding for research facilities, faculty support, and student scholarships, can shed light on the level of institutional commitment. Understanding how the University of Arizona's investment compares to its peers provides context for evaluating its program outcomes and identifying areas where increased support could positively influence the University of Arizona aerospace engineering ranking.

- Assessing Student Quality and Selectivity

Analyzing the academic profiles of students admitted to aerospace engineering programs at peer institutions, including GPA, standardized test scores, and research experience, provides a measure of student quality and program selectivity. Comparing these metrics against the University of Arizona's student profile offers insights into its competitiveness in attracting top talent, a factor that significantly influences its overall standing in the University of Arizona aerospace engineering ranking.
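A common way to quantify the benchmarking described in the list above is to express each of a program's metrics as a z-score against a peer group: how many peer standard deviations it sits above or below the peer average. The sketch below is illustrative only; the metric names, the anonymous peer set, and all values are hypothetical, and real comparisons would depend on how each metric is defined and sourced.

```python
# Minimal sketch: benchmark one program against a peer group with z-scores.
# Metric names, peers, and values are HYPOTHETICAL. Requires Python 3.9+.
from statistics import mean, stdev


def peer_z_scores(program: dict[str, float], peers: list[dict[str, float]]) -> dict[str, float]:
    """For each metric, the number of peer standard deviations the program
    sits above (+) or below (-) the peer mean."""
    if len(peers) < 2:
        raise ValueError("need at least two peers to estimate spread")
    result = {}
    for metric, value in program.items():
        peer_values = [p[metric] for p in peers]
        spread = stdev(peer_values)  # sample standard deviation of the peer group
        # If every peer reports the same value, a z-score is undefined; report 0.
        result[metric] = 0.0 if spread == 0 else (value - mean(peer_values)) / spread
    return result


# Hypothetical figures: research expenditure in arbitrary units, placement rate in percent.
program = {"research_expenditure": 45.0, "placement_rate": 80.0}
peers = [
    {"research_expenditure": 20.0, "placement_rate": 75.0},
    {"research_expenditure": 30.0, "placement_rate": 85.0},
    {"research_expenditure": 40.0, "placement_rate": 95.0},
]

print(peer_z_scores(program, peers))
```

In this made-up example the program sits well above its peers on research expenditure (z = 1.5) but slightly below them on placement (z = -0.5), which points to where improvement effort might matter most. Putting every metric on the same standardized scale makes such strengths and gaps comparable across metrics measured in different units.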

By conducting a thorough peer comparison, the University of Arizona can gain a more comprehensive understanding of its Aerospace Engineering program's strengths, weaknesses, and opportunities for improvement. This analysis informs strategic decision-making and ultimately contributes to enhancing its competitiveness and strengthening its position in national and global evaluations. The insights gained support the drive to improve both the University of Arizona aerospace engineering ranking and overall program quality.

6. Career Placement

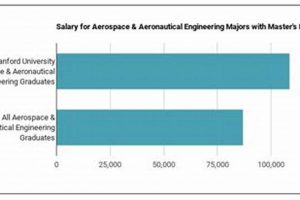

Career placement and the University of Arizona’s aerospace engineering ranking exhibit a strong correlative relationship. The ability of a program to successfully place its graduates in relevant and desirable positions directly influences its perceived value and subsequent ranking. High placement rates at reputable companies, competitive starting salaries, and alumni success stories signal a program’s effectiveness in preparing students for industry demands. These outcomes serve as tangible evidence of the program’s quality, attracting prospective students, bolstering its reputation, and contributing positively to its overall standing.

The relationship runs in both directions. A higher ranking often attracts more companies seeking to recruit from the program, leading to more career opportunities for graduates. This virtuous cycle reinforces the program's attractiveness and contributes to a sustained competitive advantage. For example, if the University of Arizona's aerospace engineering graduates are regularly hired by employers such as SpaceX, Boeing, or NASA, that reinforces the program's reputation and positively affects its ranking. The practical significance of this relationship is that prospective students can use placement outcomes to select a program that aligns with their career goals. It also serves as a key performance indicator for the university, guiding strategic decisions related to curriculum development, industry partnerships, and career services.

In conclusion, career placement is not merely an outcome of the University of Arizona’s aerospace engineering program but a critical component that shapes its perceived value and informs its ranking. The program’s ability to facilitate successful career launches for its graduates acts as a testament to its quality, attracting stakeholders, and strengthening its overall standing. Challenges involve continuously adapting the curriculum to meet evolving industry needs and effectively showcasing graduate success stories to enhance the program’s reputation. By prioritizing career placement, the university can strengthen its position in the aerospace engineering landscape and enhance the long-term success of its graduates.

Frequently Asked Questions

The following questions address common inquiries regarding the evaluation and significance of the University of Arizona’s Aerospace Engineering program assessment.

Question 1: What factors are typically considered in determining the University of Arizona Aerospace Engineering ranking?

Common factors include faculty expertise (publications, citations, research grants), research funding and output, student selectivity (GPA, standardized test scores), graduation rates, peer assessments, and career placement statistics (starting salaries, employment rates at reputable companies). The specific weighting of these factors varies among ranking organizations.

Question 2: How frequently are the University of Arizona Aerospace Engineering rankings updated?

Most major ranking organizations publish updates annually. However, the data collection and analysis periods can vary, meaning that a ranking released in a given year may reflect data from the prior year or even earlier.

Question 3: Are all University of Arizona aerospace engineering ranking assessments equally reliable?

No. Different ranking methodologies prioritize different factors and utilize varying data sources. It is crucial to evaluate the methodology of each ranking to determine its relevance and validity for individual needs. Assessments with transparent methodologies and verifiable data sources are generally considered more reliable.

Question 4: How can one use the University of Arizona aerospace engineering ranking to inform decisions about graduate school?

Rankings should be considered alongside other factors, such as program specializations, research opportunities, faculty expertise in specific areas of interest, location, and cost. A high ranking does not guarantee a perfect fit for every individual. Prospective students should research programs thoroughly and consider their personal priorities.

Question 5: Does a decline in the University of Arizona Aerospace Engineering ranking necessarily indicate a decline in program quality?

Not necessarily. A decline in ranking could result from changes in the ranking methodology, increased competition from other programs, or temporary fluctuations in specific metrics (e.g., research funding). A comprehensive assessment requires examining longitudinal trends and considering multiple data points, not relying solely on a single year’s ranking.

Question 6: How does the University of Arizona Aerospace Engineering program leverage ranking feedback for continuous improvement?

A program can analyze ranking methodologies and results to identify areas for strategic investment and improvement. This may include enhancing faculty recruitment, increasing research funding, strengthening industry partnerships, or refining the curriculum to better meet industry needs. Ranking data serve as one input into a broader continuous improvement process.

In summary, these FAQs aim to clarify how the University of Arizona aerospace engineering ranking works, emphasizing the importance of critical evaluation and multifaceted decision-making.


Conclusion

The preceding exploration of the University of Arizona aerospace engineering ranking has illuminated the multifaceted nature of program evaluation. The assessment process relies on methodological rigor, reputational influence, data transparency, longitudinal trends, peer comparisons, and career placement metrics. Understanding these interconnected elements is crucial for interpreting and using ranking data effectively.

The ongoing evolution of the aerospace engineering field, coupled with dynamic changes in ranking methodologies, necessitates continuous adaptation and strategic planning. Stakeholders should adopt a nuanced perspective, recognizing that any single assessment represents a snapshot in time, reflecting specific criteria and data sources. The ultimate value lies in a comprehensive understanding of the factors that contribute to program quality and the informed decisions that result.